Introducing Tinfoil Containers

At Tinfoil, we've been running AI models inside secure enclaves since day one. Our Private Inference API gives you an easy way to use models with verifiable privacy. But if you're building an application, inference is usually just one piece of the puzzle.

When you're building a full-stack, end-to-end private AI application, you need more than just a model endpoint. You need auth servers, business logic, orchestration layers, and the agent that decides when to call the model and what to do with the response. There's no point securing only inference if the rest of your pipeline remains unencrypted.

And if you're running more custom AI workloads — custom models, training, finetuning — our out-of-the-box inference endpoints aren't going to be enough.

What you need is the ability to run your own code in a CPU-only or GPU-based enclave, with the same strong security and privacy guarantees that Tinfoil's Private Inference API provides you now.

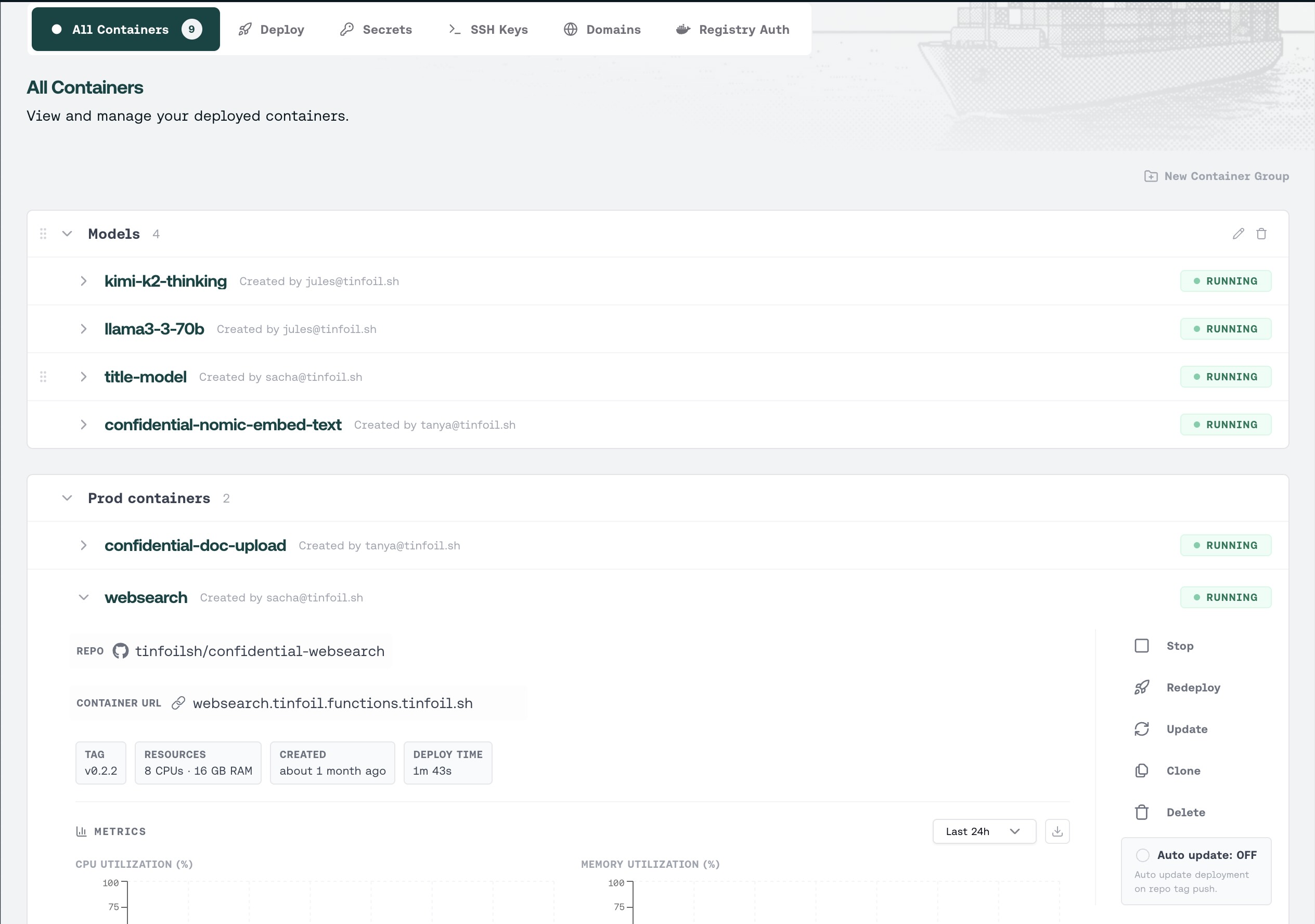

That's why we're launching Tinfoil Containers. Tinfoil Containers lets you deploy any Docker container inside a secure enclave, with the same infrastructure and hardware-backed security guarantees we use for our own inference platform.

Why enclaves have seen little adoption

Secure enclaves have been around for almost a decade. The vision is seriously compelling: a workload running on a machine you do not control, that cannot be accessed by anyone but you. A workload running on a machine that could be actively trying to exfiltrate its secrets, protected in all but the most extreme cases.

But in the real world, almost no one uses enclaves. And when they do, it's usually an enterprise compliance checkbox, with most of the security coming from obscurity: hiding behind additional complexity.

If you've actually tried to deploy something in an enclave, you know. And forget about GPUs.

Let's start with Intel. Intel SGX was the first widely available enclave technology. It required you to partition your application into "trusted" and "untrusted" components, rewrite significant chunks of code, and fit everything into a constrained memory region. Intel TDX, released two years ago, was a massive improvement. With TDX, you could deploy your entire OS into a confidential VM. In practice, most people access this technology through Azure, which offers Confidential Containers with a more familiar container interface. Yet that offering is shrouded in unnecessarily confusing and contradictory trust models.

As Erik Chi, who's been building The Open Anonymity Project at Stanford and UMich, put it:

"We were using Azure's confidential containers (ACI) which is a nightmare to set up correctly, from TLS certificate binding, hardware measurements, reproducible image digests, etc. We can do the same thing on Tinfoil Containers in less than 20 minutes with the nice attestation SDK, clear docs, debug mode, almost zero update downtime, and transparent architecture that everyone can audit."

Another option that's seen slightly more adoption is AWS Nitro Enclaves. But they come with their own maze of vsock networking, enclave image formats, and tooling that assumes you have a team of infrastructure engineers with nothing else to do.

All of these options are very much designed for the enterprise, and often become a box-checking exercise rather than a genuine security architecture. Azure CVM, for instance, uses a proprietary trust service for attestation. This doesn't make sense if the goal is to remove the need to trust the cloud provider.

And this is merely the state of affairs for CPUs.

NVIDIA Confidential Computing

For GPUs, the situation is even more dire. Only two providers offer GPU confidential VMs. One is Azure, which is even harder to use when GPUs are involved (we never managed to get it set up, even though we tried for xx weeks) and supports only a single H100. The other is GCP, whose confidential VM with GPU support is somewhat better from a usability perspective (we tested it and ran experiments on it), but it has been in private preview for a while and also offers only a single H100.

Tinfoil was the first provider to offer multi-GPU confidential computing. We had to build this ourselves on bare-metal hardware because we wanted to support real open-source models that require multi-GPU setups, not toy models. Now we're making this capability available to our customers so they can easily deploy GPU-based enclaves.

Rudolf Laine at Workshop Labs, who's building a private finetuning product on Tinfoil, put it well:

"We have fast deployment cycles for servers that we run on Tinfoil TEEs to guarantee customer privacy. Tinfoil Containers makes the TEE deployment friction almost nonexistent and lets us iterate quickly. It's an important step towards the future where most ML workloads are secured by running on verifiably-private TEEs."

NVIDIA's latest chips support multi-GPU confidential computing, and with Vera Rubin, enclaves that span nodes. This is particularly relevant for training. We want to bring this capability to our customers as soon as possible without being bottlenecked by cloud providers, so they can run large training runs fully privately and without being subjected to enclave configuration hell.

What Tinfoil Containers provides

Tinfoil Containers lets you take any Docker image and deploy it into a secure enclave backed by AMD SEV-SNP or Intel TDX hardware, optionally with NVIDIA Confidential Computing for GPU workloads. Memory is encrypted at the hardware level. The host can't see in. We can't see in. The confidential VM runs your container alongside a TLS-terminating shim, so traffic is encrypted end-to-end from the client into the enclave.

The deployment model is designed around a simple loop:

You start from a template repo that gives you a tinfoil-config.yml. You point it at your Docker image, pinned by SHA256 digest, set your CPU and memory, and declare your secrets and environment variables. You push a Git tag. Each tag creates an auditable record in the Sigstore transparency log. Your container comes up at https://<name>.<org>.containers.tinfoil.sh.
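As a rough illustration of the "pinned by SHA256 digest" requirement, here is a minimal Python sketch that checks whether an image reference uses a digest rather than a mutable tag, and builds the URL pattern mentioned above. The function names and the example image are hypothetical, and this is not part of Tinfoil's tooling or a description of the tinfoil-config.yml schema.

```python
import re

# A digest-pinned Docker reference looks like "registry/repo@sha256:<64 hex chars>".
_DIGEST_REF = re.compile(r"[^@\s]+@sha256:[0-9a-f]{64}")


def is_digest_pinned(image_ref: str) -> bool:
    """Return True if the reference is pinned to a SHA256 digest, not a mutable tag."""
    return _DIGEST_REF.fullmatch(image_ref) is not None


def container_url(name: str, org: str) -> str:
    """Build the URL pattern from the post: https://<name>.<org>.containers.tinfoil.sh"""
    return f"https://{name}.{org}.containers.tinfoil.sh"


if __name__ == "__main__":
    # Hypothetical image reference, for illustration only.
    pinned = "ghcr.io/example-org/example-app@sha256:" + "a" * 64
    print(is_digest_pinned(pinned))
    print(is_digest_pinned("ghcr.io/example-org/example-app:latest"))
    print(container_url("example-app", "example-org"))
```

Pinning by digest matters here because a tag like `latest` can be repointed after the fact, while a digest identifies one exact image that can be tied to the record in the transparency log.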

On every connection, our SDKs verify the enclave's attestation report — confirming the hardware is genuine, the code matches what's published in the transparency log, and nothing has been tampered with. If anything doesn't check out, the connection fails. This is what makes privacy verifiable rather than promised.
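The fail-closed behavior described above can be sketched roughly as follows. This is a simplified model, not Tinfoil's SDK: `AttestationReport`, its fields, and `verify_before_connect` are hypothetical names, and a real report carries signed hardware evidence rather than a pre-computed boolean.

```python
import hmac
from dataclasses import dataclass


class AttestationError(Exception):
    """Raised when an enclave fails verification; the caller must not connect."""


@dataclass(frozen=True)
class AttestationReport:
    # Hypothetical, simplified fields for illustration.
    hardware_signature_valid: bool  # outcome of checking the hardware vendor's signature chain
    code_measurement: str           # hex digest of the code the enclave booted


def verify_before_connect(report: AttestationReport, published_measurement: str) -> None:
    """Fail closed: raise unless the hardware is genuine and the code matches the published record."""
    if not report.hardware_signature_valid:
        raise AttestationError("hardware evidence did not verify")
    measured = report.code_measurement.lower().encode()
    expected = published_measurement.lower().encode()
    # Constant-time comparison against the value published in the transparency log.
    if not hmac.compare_digest(measured, expected):
        raise AttestationError("enclave code does not match the published measurement")
```

The design point is that a failed check raises instead of returning a warning, so a client cannot accidentally send data to an enclave that did not verify.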

Who this is for

The people we've been working with fall into a few categories.

There are AI developers who need to run orchestration logic alongside private inference. An agent that calls a model, processes the response, hits an external API, and returns a result — all of that needs to be inside the trust boundary if you care about privacy. Calling a private inference API from an unprotected server kind of defeats the purpose.

There are startups building products where privacy is the product. Health data, legal tech, financial services, anything where your users need to trust you with sensitive information and "we promise we won't look" isn't a satisfying answer. Hardware attestation is a much better answer.

And there are researchers working on cryptography, end-to-end security, verifiable AI safety and governance, and related areas. An attested enclave offers useful guarantees for this work, but enclave configuration is often orthogonal to the main project, and you don't want to spend a ton of time on it. Tinfoil Containers lets you get up and running quickly with an enclave for your experiments. As Darya Kaviani at UC Berkeley put it:

"Running our own custom Docker container on Tinfoil Containers is a major unlock. It lets us run our full end-to-end system in trusted hardware using the same simple Python SDK we already use to call Tinfoil's embedding and LLM models. Serverless enclaves have finally arrived!"

Everything you'd expect

We built this with every feature we wished existed when we were deploying our own services. You can bring any Docker image — no special SDK inside the container, no rewriting your application. Our SDKs in Python, Go, and TypeScript handle client-side attestation automatically. Updates are blue-green with zero downtime: push a new tag, start the update, and we spin up the new version alongside the old one, switching traffic only after health checks pass. There's a debug mode that gives you a separate environment with SSH access, completely independent from production, so you can actually troubleshoot without guessing. Secrets are stored encrypted and injected at runtime. You can pull from private registries. You can use custom domains. GPU support is there for workloads that need NVIDIA confidential computing on H200 and B200 hardware. Health checks and system metrics are built in.
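To make the blue-green update rule concrete, here is a minimal Python sketch of the switching logic: traffic moves to the new version only once a health check passes, and otherwise stays on the old one. The function and parameter names are hypothetical, and the sketch omits details a real rollout needs, such as waiting between checks. It is not Tinfoil's implementation.

```python
from typing import Callable


def blue_green_update(
    active: str,
    candidate: str,
    is_healthy: Callable[[str], bool],
    max_checks: int = 3,
) -> str:
    """Return the version that should receive traffic after the update attempt."""
    for _ in range(max_checks):
        if is_healthy(candidate):
            # Health check passed: switch traffic to the new version.
            return candidate
    # The candidate never became healthy: the old version keeps serving.
    return active


if __name__ == "__main__":
    # Hypothetical version labels and a stub health check.
    print(blue_green_update("v1", "v2", lambda version: True))
    print(blue_green_update("v1", "v2", lambda version: False))
```

Because the old version keeps serving until the candidate is confirmed healthy, a bad release never takes traffic, which is where the zero-downtime property comes from.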

It's the kind of feature set where each item individually seems obvious and table-stakes, but if you've tried to get any of this working in other enclave platforms, you know that "obvious" and "actually exists" are different things.

What's next

We're just getting started. We think there's a long road ahead to make TEEs more usable, accessible, and secure, and to develop best practices.

If you're running sensitive workloads and the current state of affairs — trusting a chain of providers to honor their privacy policies — makes you uneasy, try it. The quickstart shouldn't take more than a few minutes.

The hardware to enforce privacy at the lowest level has existed for years. The problem was always that using it required massive amounts of suffering. We think that's finally over.

Please reach out to us at contact@tinfoil.sh if you'd like to get started with Tinfoil Containers!
